Rate Limiting Algorithms in depth

Introduction

In today’s world, rate limiting has become essential for system stability and fairness. Whether you’re running an AI SaaS platform and want to keep free trial users in check, or protecting your API server from resource starvation, a good rate limiting strategy ensures your service stays reliable under pressure. In this article, I’ll walk you through the most widely used algorithms that power rate limiting in modern systems.

We’ll explore each algorithm in depth, covering its pros and cons. Let’s dive in

Token Bucket Algorithm

Imagine this: we have a bucket, and every T seconds a token is dropped into it. Each incoming request needs to grab a token from the bucket in order to pass through. If the bucket is empty, requests have to wait until new tokens arrive. Because the bucket can hold multiple tokens, clients are allowed to make short bursts of requests — but over time, the rate is capped by how quickly tokens refill.

That’s token bucket algorithm in a nutshell.

When the bucket is empty, incoming requests can’t proceed. Most implementations reject them immediately (think 429 Too Many Requests). Others choose to queue requests until tokens are available, but that’s not part of the core Token Bucket algorithm — it’s an extra design decision depending on whether you value strict protection or smoother user experience.

Pros:

Simple concept to grasp and implement

Flexible as you can control the refill speed and bucket capacity

Throughput of an API is capped by the token refill rate

Cons:

Burst allowance can be risky: If many tokens are available (e.g., 50), the algorithm allows all 50 requests in an instant — which can look like a traffic spike (“thundering herd”) and overload downstream systems.

Stateful per client: Requires tracking a bucket for every user/client/IP, which can add memory overhead at scale.

Queuing isn’t built-in: If you want to delay rather than drop requests, you need extra queuing infrastructure.

Leaky Bucket Algorithm

This algorithm approaches rate limiting from a different perspective. Imagine the same bucket, but this time it has a tiny hole at the bottom, leaking water at a constant rate. Each incoming request is like a drop of water added to the bucket. As long as there’s space, the request goes in and eventually drips out at the steady leak rate. But once the bucket is full, any additional drops (requests) simply spill over and get dropped.

Code wise the steady flow is enforced by a timer (or tick) that “releases” requests at a fixed interval. It is literally like a buffer. You can fully control the rate of leaking and size of bucket.

Pros:

Request steadily flow into our system which solves the bursting issue in the token bucket algorithm.

It gives a strong back pressure by rejecting any requests once the bucket is full.

Simple to undertand and implement

Cons:

Increased response time as requests are getting processed in a steady flow

Anti burst, this was a pro but can be a con depending on the use case.

Fixed Window Counter

Now we move on to a completely different analogy: this one is all about time. Imagine a window of fixed length — say one minute, from 1:00 to 1:59. Within that window, only a certain number of requests are allowed through, let’s say 100. Once the cap is reached, any additional requests during that same window are rejected outright. When the next window starts (2:00 to 2:59), the counter resets, and the process repeats.

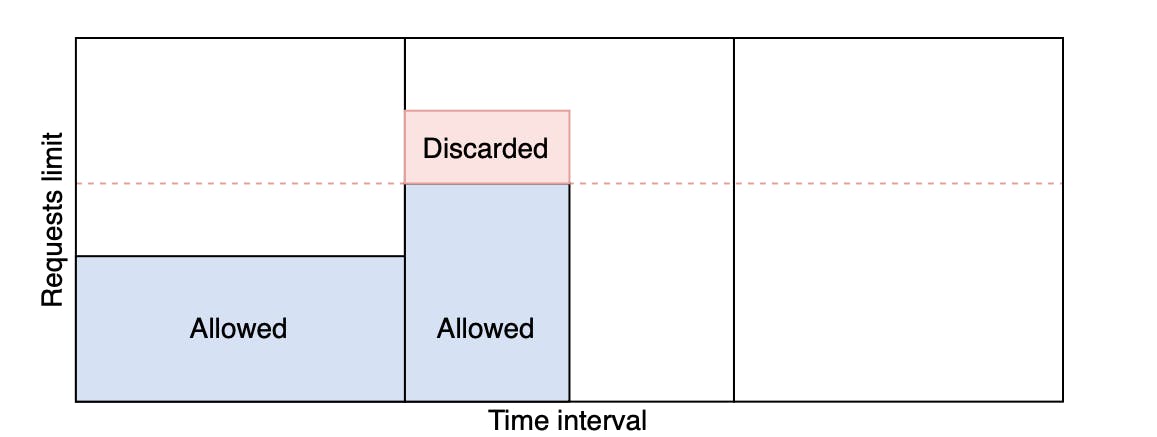

As we can see in the image above once the requests exceed the dotted line they get discarded.

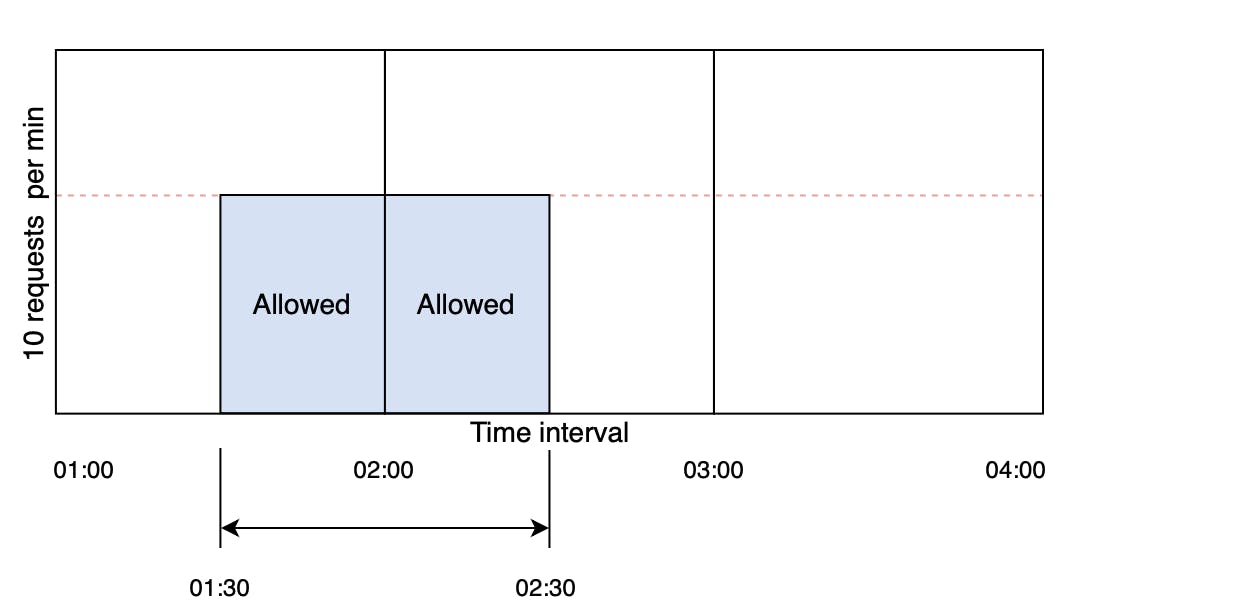

However, this algorithm has one major disadvantage; if we look at the image below

The main flaw of the fixed window approach shows up when requests cluster around the boundary. For example:

Between 1:30 and 2:00, a client sends 100 requests (the max).

Then between 2:00 and 2:30, they send another 100.

Both windows independently look fine — each one respects the “100 requests per minute” limit.

But if you look at the overlapping interval 1:30–2:30 (which is still one minute long), the client actually made 200 requests. This breaks the intended rate limit and can overload your system.

Pros:

Easiest of all rate-limit algorithms to implement (just a counter and a reset timer).

No need to store request timestamps or scan logs.

Easy to communicate (“100 requests per minute”) and easy for clients to reason about.

Cons:

Requests clustered around window edges can exceed the limit in any rolling interval (e.g., 200 requests in 60 seconds instead of 100).

Bursty clients can exploit reset points, while evenly spaced clients are constrained more strictly.

Sliding Window Log

This algorithm fixes the problem with the previous one. Instead of counting requests inside aligned windows (e.g., 2:00–2:59), we keep a timestamped log of each accepted request.

Let’s say we set a rule: maximum 5 requests per 10 seconds. Instead of fixed one-minute blocks, this window is always moving forward in time.

Each time a new request arrives, we do two things:

Prune old requests → remove any logged timestamps that are older than

now − 10 seconds.Count the remaining requests → this gives us how many requests were made in the current rolling window.

If the count is still below 5, the new request is allowed and added to the log. If the count is already at 5, the new request is rejected.

In this way, the algorithm enforces the rule across any 10-second span, not just neat boundaries like 0–10 or 10–20.

Pros:

Enforces “no more than N requests in the last W seconds” with no fixed-window edge cases.

Boundary exploits (end-of-window spikes) don’t slip through.

Cons:

Memory heavy as it stores one timestamp per accepted request (hot clients = big logs).

CPU overhead where it must prune old timestamps on every request.

Heavy per-client load can bottleneck a single store key.

Sliding Window Counter

Think of it as a compromise between Fixed Window (too sloppy) and Sliding Log (too heavy).

Instead of tracking the whole window which can be heavy (store a lot of logs for 60 second window for example)

So we split it into buckets, so a 60 second window will have 60 buckets each of 1 second.

For example if we require maximum of 10 requests per 60 seconds;

At 12:00:00–12:00:01, we got 7 requests (in bucket A).

At 12:00:01–12:00:02, we already have 6 requests so far (in bucket B).

A new request comes in at 12:00:01.5 (halfway into bucket B).

And we want to know: “How many requests happened in the last 60s?”

In the Sliding Window Counter, we look at all the buckets that overlap with the rolling window.

Buckets that are fully inside the window are counted with a weight of 1.0 (their full value).

Buckets that are only partially inside the window — usually just the oldest and the newest — are counted with a fractional weight equal to how much of them overlaps with the window.

So if it overlapped 4 buckets 2 of them being fully inside the window; the calculation is as follows:

1.0 * (fully inside bucket A request count)

+ 1.0 * (fully inside bucket B request count)

+ oldest fraction with window * (oldest bukcet request count)

+ newest fraction with window * (newest bucket request count)

If this total sum is less than the threshold (10 requests for 60 seconds) we allow it otherwise we reject.

At first glance, the Sliding Window Counter sounds neat — split time into buckets, weight the edges, sum them all up. But think about it: if your window is 60 seconds with 1-second buckets, that’s 60 buckets to check on every request. Bump that to a 5-minute window with millisecond precision and you’re suddenly tracking 300,000 buckets. Looking at every single bucket quickly becomes wasteful. The math is overkill, and the per-request overhead will crush performance at scale.

In practice, we don’t need to scan all the buckets. Notice that:

Middle buckets are either fully inside or fully outside the window → no weighting needed.

Only the oldest and newest buckets overlap partially and require fractional weights.

So instead of summing hundreds (or thousands) of buckets, we can keep:

A running total of all requests.

Adjust only the two edge buckets for overlap.

This way, each request check is O(1) — constant time regardless of how long your window is or how fine-grained your buckets are.

Pros:

Much lighter than Sliding Log no per-request timestamps, just bucket counters.

Fairer than Fixed Window smooths out boundary spikes by blending across buckets.

O(1) runtime per request, scales very well

Cons:

Approximate, not exact as counts are close but not perfect (depending on bucket size).

Complexity as implementation is trickier than Fixed Window or Token Bucket.

Summary

Rate limiting is essential in modern applications, and the algorithm you choose should depend on your business model and what you expect your limiter to achieve. Whether you need strict fairness, lightweight performance, or burst tolerance, there’s a strategy that fits. Choose wisely — and happy coding!